Advancements in Distribution Grid Monitoring Enable Improved Voltage Control for Utilities

PUBLISHED ON Mar 04, 2020

Utilities face increased voltage volatility from distributed renewables-based power generation.

The power distribution grid is undergoing unprecedented levels of change. The traditional one-way model of voltage regulation presumed that voltages drop predictably along feeders from substation to customers. However, the proliferation of larger-scale Distributed Energy Resources (DER) along feeders is rendering traditional models and regulation techniques incapable of maintaining delivered voltages within ANSI C84.1 guidelines.

This is spurring new approaches to grid measurement, monitoring and control. These approaches supply the real-time measurements that Distribution Management applications need to manage voltages and maintain high power quality.

Voltage Fluctuation from DER

The traditional power delivery model pushes electricity from a centralized power generation plant through distribution feeders to the point of consumption. Power is consumed along the line, and utilities use tap changers, voltage regulators and capacitor banks to keep delivered voltage within an ANSI guideline range of +/- 5% all the way to the end of the line. Historically, the key concern was ensuring voltages did not drift above or below this range.
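The +/- 5% guideline can be expressed as a simple range check. The sketch below is illustrative only: the 120 V base, the function name and the sample readings are assumptions made for the example, not values from any utility.

```python
def within_band(voltage, nominal=120.0, tolerance=0.05):
    """Return True if voltage lies within nominal +/- tolerance (a fraction)."""
    low = nominal * (1.0 - tolerance)
    high = nominal * (1.0 + tolerance)
    return low <= voltage <= high


# Hypothetical readings (volts on an assumed 120 V base) along one feeder.
readings = {"substation": 124.5, "midpoint": 119.8, "end_of_line": 113.2}

# Collect the measurement points that fall outside the +/- 5% band.
violations = [name for name, volts in readings.items() if not within_band(volts)]
print(violations)
```

With these sample values, only the end-of-line reading falls outside the band, which is the classic low-voltage condition the traditional model was designed to prevent.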

Enter DER, electricity-producing resources or controllable loads that are connected to a local distribution system. DER can include solar panels, wind turbines, battery storage, generators and electric vehicles.

These points of power generation inject power along the distribution feeder, which may push voltage levels above or below ANSI guidelines. In other words, increasing integration of renewables brings variable load and generation fluctuations that work against the constant voltage profile model.
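A rough way to see why injection raises voltage is the common first-order approximation for a single feeder segment, in which the per-unit voltage drop is about r*p + x*q when the voltage is near 1.0 per unit. The sketch below uses that approximation; the impedance, load and DER values are hypothetical and chosen only to make the effect visible.

```python
def end_voltage_pu(v_source, r, x, p_net, q_net):
    """Approximate receiving-end voltage (per unit) for one feeder segment.

    Uses the first-order approximation drop = r*p + x*q, which assumes the
    voltage stays close to 1.0 per unit. p_net and q_net are the net real and
    reactive power drawn at the end of the segment (negative means export).
    """
    return v_source - (r * p_net + x * q_net)


# Hypothetical per-unit values, assumed for illustration only.
R_PU, X_PU = 0.05, 0.08    # segment resistance and reactance
V_SUB = 1.03               # substation voltage set high to offset feeder drop
LOAD_P, LOAD_Q = 0.6, 0.2  # customer load at the end of the segment
DER_P = 1.6                # DER real-power injection at the same point

v_no_der = end_voltage_pu(V_SUB, R_PU, X_PU, LOAD_P, LOAD_Q)
v_with_der = end_voltage_pu(V_SUB, R_PU, X_PU, LOAD_P - DER_P, LOAD_Q)

print(f"without DER: {v_no_der:.3f} pu, with DER: {v_with_der:.3f} pu")
```

In this example the same substation setting that keeps the end of the line healthy under load pushes it above the +5% limit once the DER exports more than the local load consumes.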

In addition, solar and wind DER are, by nature, intermittent. Managing unpredictable intermittency without measurement, monitoring and control is even more difficult and may result in oscillatory voltages in the system. Voltage rises at injection points may also create reverse power flow through the system.

As a result, utilities require more advanced power monitoring and control systems that can precisely and quickly measure voltage to enable their Distribution Management Systems (DMS) to respond and regulate the voltage on their feeder lines. This means that utilities integrating DER need real-time data to implement their control strategies.

“The issue goes beyond simply burnt toast in a home,” says Ray Wright, VP Product Management, Power Products at NovaTech. “What we are concerned about with unpredictable voltage delivery is the disruption of service to household, commercial and industrial customers all along the feeder, including damage to motors and equipment and interruption of service.”

More Precise Monitoring and Control

The challenge of effectively controlling unpredictable, variable voltage and potentially bi-directional power flow starts with measurement. The only way to control this kind of variability is to have measurements along distribution feeder lines that are accurate and that can communicate data to control systems fast enough to modulate the voltage and keep it under control. Essentially, in real time.

Voltage delivery monitoring and control can be the domain of DMS. These systems have evolved over the years, and advanced DMS models now use Volt/Var optimization (VVO), in which capacitor banks, voltage regulators and solid-state systems are switched on and off to maintain acceptable levels of power factor and voltage. More recently, Distributed Energy Resource Management Systems (DERMS) have emerged in response to the increasing amount of renewables-based distributed energy resources. These are complex control systems for monitoring and controlling distributed sources of energy.
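The switching behavior described above can be illustrated with a single capacitor bank and a deadband rule. This is a deliberately simplified sketch, not a VVO implementation: real systems coordinate many devices against feeder-wide objectives, and the thresholds and voltage samples here are hypothetical.

```python
def next_capacitor_state(voltage_pu, is_on,
                         switch_on_below=0.97, switch_off_above=1.03):
    """Decide whether one capacitor bank should be on for the next interval.

    A deadband between the two thresholds (hypothetical values) keeps the
    bank from toggling on every small voltage change.
    """
    if not is_on and voltage_pu < switch_on_below:
        return True   # voltage is low: switch the bank in for var support
    if is_on and voltage_pu > switch_off_above:
        return False  # voltage is high: switch the bank out
    return is_on      # inside the deadband: hold the current state


# Hypothetical sequence of per-unit voltage measurements from the feeder.
samples = [1.00, 0.96, 0.98, 1.02, 1.04, 1.00]

state = False
history = []
for voltage in samples:
    state = next_capacitor_state(voltage, state)
    history.append(state)

print(history)
```

The decision is only as good as the voltage samples feeding it, which is why measurement accuracy and reporting speed matter to the control loop.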

“DERMS requires accurate, real-time measurement of voltages, loads, reactive power, fault data, and even weather data,” says Wright. “A key consideration has been how to design and install these monitoring systems in a way that is cost-effective for utilities. This has called into question the traditional approach of grid monitoring with conventional magnetic current transformer (CT) and potential transformer (PT). The installed cost of CTs and PTs is expensive and time-consuming, plus the feeder must be powered down for their installation.”

An alternative, lower-cost approach is to employ low-voltage (0-10 V AC) sensor technology for all voltage and current measurements. These sensors are safe, accurate for all required measurements and can be installed without taking an outage.

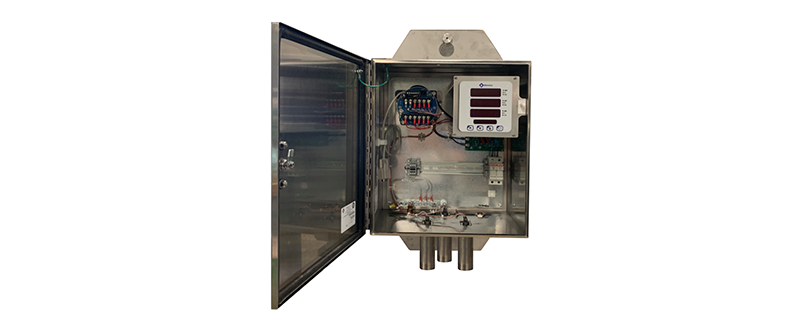

Three raw voltages and currents can be wired to a Bitronics Distribution Grid Monitor (DGM), a pole-top measurement system, which derives dozens of useful measurements, including voltages accurate to better than 0.5%, loads, power factor, and real and reactive power. An ANSI 51 overcurrent element enables reporting of fault pickup and peak fault currents. All these measurements are reported to DMS and DERMS through DNP3 over radio.
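As a rough illustration of how such quantities relate, the sketch below derives single-phase real power, reactive power and power factor from RMS voltage, RMS current and phase angle, and reports pickup and peak current from a list of samples. It is not the DGM's algorithm: the function names and sample values are hypothetical, and a real ANSI 51 element applies an inverse-time characteristic rather than the plain threshold used here.

```python
import math


def power_quantities(v_rms, i_rms, angle_deg):
    """Return (P in W, Q in var, S in VA, power factor) for one phase.

    angle_deg is the angle by which the current lags the voltage.
    """
    phi = math.radians(angle_deg)
    s = v_rms * i_rms
    p = s * math.cos(phi)
    q = s * math.sin(phi)
    pf = p / s if s else 0.0
    return p, q, s, pf


def fault_summary(currents, pickup):
    """Return (picked_up, peak) for a sequence of RMS current samples.

    Simplification: a sample above the pickup threshold counts as a pickup.
    A real time-overcurrent element also applies an inverse-time delay.
    """
    above = [amps for amps in currents if amps > pickup]
    return bool(above), (max(above) if above else None)


# Hypothetical values: 7.2 kV line-to-ground, 100 A, current lagging by 60 degrees.
p, q, s, pf = power_quantities(7200.0, 100.0, 60.0)
print(f"P={p / 1e3:.1f} kW  Q={q / 1e3:.1f} kvar  PF={pf:.2f}")

# Hypothetical RMS current samples (amps) around a fault, with a 600 A pickup.
picked_up, peak = fault_summary([180, 220, 950, 1400, 300], pickup=600)
print(picked_up, peak)
```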

“Initial DGM deployment at a New England utility drove further DGM enhancements,” says Wright. “This includes new measurements for ‘Normalized Voltage’ to accommodate sensor readings instead of PTs and CTs, additional surge suppression and ‘safety shields’ to prevent tampering of cable connectors. This major project was driven by a utility commission mandate to accurately measure and report end-of-line voltages.”

Trends indicate that DER integration will increase significantly each year, and with it the need to maintain voltages, power factor and frequency within desired limits. New grid measurement and monitoring technologies are essential to keep these factors under control.